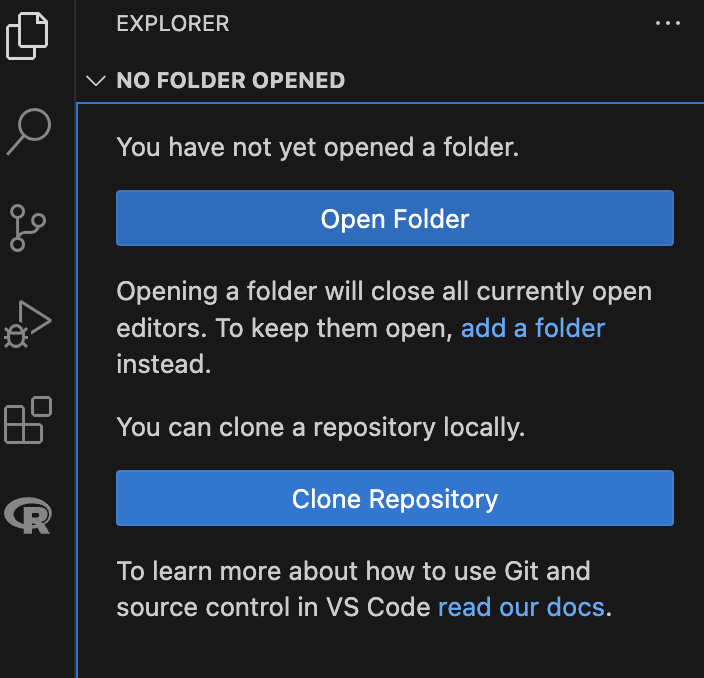

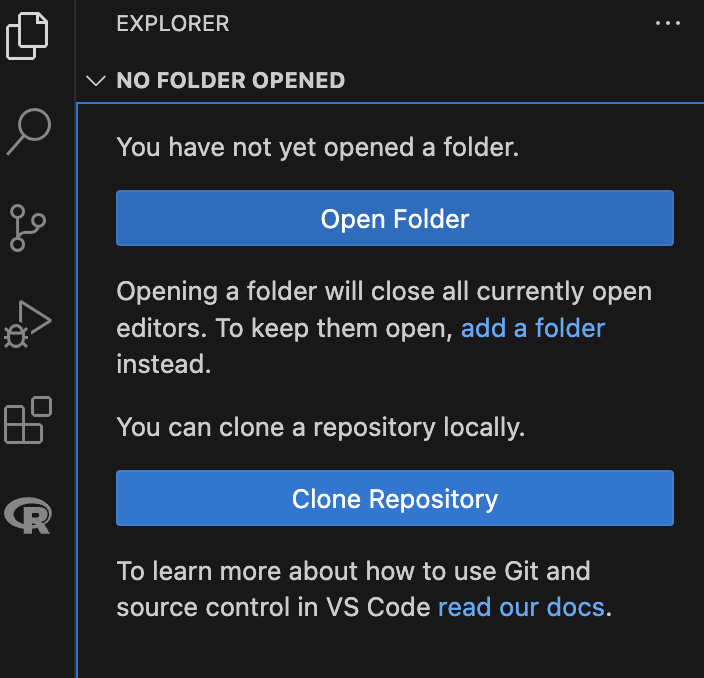

class: middle, inverse, title-slide

.title[
# Intro to Python - Session 2
]
.subtitle[
## <html><br /> <br /> <hr color='#EB811B' size=1px width=796px><br /> </html><br /> Bioinformatics Resource Center - Rockefeller University
]
.author[
### <a href="http://rockefelleruniversity.github.io/Intro_To_Python/" class="uri">http://rockefelleruniversity.github.io/Intro_To_Python/</a>
]
.author[
### <a href="mailto:brc@rockefeller.edu" class="email">brc@rockefeller.edu</a>
]

---

## Recap

Session 1 introduced the basics of Python:

- [Background to Python](https://rockefelleruniversity.github.io/Intro_To_Python/presentations/singlepage/Session1.html#Background_of_Python)
- [Set up](https://rockefelleruniversity.github.io/Intro_To_Python/presentations/singlepage/Session1.html#Set_Up)
- [Variables and Functions](https://rockefelleruniversity.github.io/Intro_To_Python/presentations/singlepage/Session1.html#Variables_and_Functions)
- [Data Objects](https://rockefelleruniversity.github.io/Intro_To_Python/presentations/singlepage/Session1.html#Data_Objects)
- [Custom Functions](https://rockefelleruniversity.github.io/Intro_To_Python/presentations/singlepage/Session1.html#Custom_Functions)
- [Control Statements](https://rockefelleruniversity.github.io/Intro_To_Python/presentations/singlepage/Session1.html#Control_Statements)

---

## Session Overview

- [Course Home Page](http://rockefelleruniversity.github.io/Intro_To_Python/)
- [Reading and Writing Files](https://rockefelleruniversity.github.io/Intro_To_Python/presentations/singlepage/Session2.html#Reading_and_Writing_Files)
- [Scripts](https://rockefelleruniversity.github.io/Intro_To_Python/presentations/singlepage/Session2.html#Scripts)

---
class: inverse, center, middle

# Reading and Writing Files

<html><div style='float:left'></div><hr color='#EB811B' size=1px width=720px></html>

---

## Reading/writing files

Most
of the time, you will not be generating data in Python but will be importing it from external files. A standard format for this data is a table:

<table>
 <thead>
  <tr> <th style="text-align:left;"> Gene_Name </th> <th style="text-align:right;"> Sample_1.hi </th> <th style="text-align:right;"> Sample_2.hi </th> <th style="text-align:right;"> Sample_3.hi </th> </tr>
 </thead>
 <tbody>
  <tr> <td style="text-align:left;"> Gene_a </td> <td style="text-align:right;"> 4.557475 </td> <td style="text-align:right;"> 4.853973 </td> <td style="text-align:right;"> 3.713313 </td> </tr>
  <tr> <td style="text-align:left;"> Gene_b </td> <td style="text-align:right;"> 2.946111 </td> <td style="text-align:right;"> 3.959567 </td> <td style="text-align:right;"> 3.452269 </td> </tr>
  <tr> <td style="text-align:left;"> Gene_c </td> <td style="text-align:right;"> 3.391021 </td> <td style="text-align:right;"> 5.548094 </td> <td style="text-align:right;"> 2.957028 </td> </tr>
  <tr> <td style="text-align:left;"> Gene_d </td> <td style="text-align:right;"> 3.536898 </td> <td style="text-align:right;"> 5.968292 </td> <td style="text-align:right;"> 2.874046 </td> </tr>
  <tr> <td style="text-align:left;"> Gene_e </td> <td style="text-align:right;"> 9.539782 </td> <td style="text-align:right;"> 10.676720 </td> <td style="text-align:right;"> 9.979983 </td> </tr>
  <tr> <td style="text-align:left;"> Gene_f </td> <td style="text-align:right;"> 12.132165 </td> <td style="text-align:right;"> 9.201656 </td> <td style="text-align:right;"> 10.520746 </td> </tr>
  <tr> <td style="text-align:left;"> Gene_g </td> <td style="text-align:right;"> 8.484076 </td> <td style="text-align:right;"> 10.181807 </td> <td style="text-align:right;"> 10.108792 </td> </tr>
  <tr> <td style="text-align:left;"> Gene_h </td> <td style="text-align:right;"> 9.848261 </td> <td style="text-align:right;"> 11.100236 </td> <td style="text-align:right;"> 9.814269 </td> </tr>
 </tbody>
</table>

---

## Working Directory

If you are reading in data,
you need to know which vantage point your computer is looking from. This vantage point is the Working Directory.

---

## *os* package

The *os* (operating system) package has several utilities for working with files and directories. First, let's check our current Working Directory using `getcwd()`.

``` python
import os
```

``` python
os.getcwd()
```

```
## '/Users/mattpaul/'
```

---

## Setting my Working Directory

The working directory is based on your VS Code workspace. If you have not set one, you can do so using the *Explorer* tab on the left of VS Code. If the workspace is in the wrong place, you can open a new window, set up a new workspace, then open a new Python console.

Let's navigate to the course material that we downloaded. There are some example data sets there: *Intro_To_Python-master/r_course/*

---

## Working Directory

You can also change your Working Directory directly in Python using the `chdir()` function.

``` python
os.chdir("/Users/mattpaul/Downloads/Intro_To_Python-master/r_course/")
```

``` python
os.listdir()
```

```
## ['presRaw', 'customCSS', 'Descriptions', 'GeneNames_highexpression.txt', 'imgs', '_course.yml', 'data']
```

``` python
os.listdir("data")
```

```
## ['subset_dataset.py', 'Final Project', 'GeneExpressionWithMethods.txt', 'GeneExpression.txt', 'readThisTable.csv', 'ToRead.csv', 'gene_expression.csv', 'myresult.RData', 'subset_dataset_args.py', 'GeneNames.txt', 'height-weight.csv', 'height-weight_female.csv', 'gene_data.csv', 'Data Visualization']
```

---

## Paths

A path you give to Python can be either relative or absolute.

---

## Paths

To use a map analogy:

- Relative paths are like directions, e.g. take a left, go straight, then take a right. Where you start from is essential.
- Absolute paths are like an address. They give the final location without needing any other information.

Both have their benefits.

---

## Paths in use

The command we used before used an absolute path.
On macOS and Linux, absolute paths start with "/", the top level (root) of your computer's file structure.

``` python
os.chdir("/Users/mattpaul/Downloads/Intro_To_Python-master/r_course/")
```

This absolute path is very precise, but it contains information specific to my computer, so it will only work on this computer.

Given that we started at */Users/mattpaul*, we could also have used a relative path. A relative path relies on knowing our starting position to find where we are going.

``` python
os.chdir("Downloads/Intro_To_Python-master/r_course/")
```

If we make sure the code is run from an equivalent starting position, then relative paths work across computers.

---

## Back to Reading

There are many ways to read in data. Most of the time we are importing simple 2D tables, so we want to generate a NumPy array using the `genfromtxt()` function.

Here we are reading a CSV (Comma-Separated Values) file, in which each value is separated by a comma. So when we read the file, we specify that the delimiter is a comma. We can use the relative path from our new working directory to find it.
``` python
import numpy as np

my_table = np.genfromtxt("data/ToRead.csv", delimiter=",")
type(my_table)
```

```
## <class 'numpy.ndarray'>
```

``` python
my_table
```

```
## array([[ 4.5702372 , 3.23046698, 3.35182734, 3.93087741, 4.09824666,
## 4.41872599],
## [ 3.56173302, 3.63228533, 3.58752332, 4.18528704, 1.38097605,
## 5.93699012],
## [ 3.79727358, 2.87446167, 4.01691555, 4.17577191, 1.98826299,
## 3.78091724],
## [ 3.39824235, 4.41520211, 4.89356109, 8.4323419 , 9.60915099,
## 9.01986467],
## [10.12878671, 10.22407116, 8.94581254, 2.93617444, 3.89292402,
## 0.91920785],
## [ 8.4743968 , 8.612628 , 7.17082986, 3.29935093, 2.57586964,
## 2.54277726],
## [10.01002009, 10.31235402, 11.60328984, 9.93070417, 7.74879528,
## 9.79882378],
## [ 9.39999242, 10.33284375, 9.37821714, 10.06519963, 10.78861857,
## 10.24532579]])
```

---

## Reading

Here we try to read in a more complex file. This data is a mixture of characters and numbers. The `dtype` argument can be used to specify the [format of the data](https://numpy.org/doc/stable/reference/arrays.dtypes.html#arrays-dtypes) we are reading in. This data also has column titles, so we skip over them with `skip_header`.

The data set contains:

* **Sex** - **U**nicode string (`'U10'`: up to 10 characters)
* **Height** - **f**loat (`'f'`: 32-bit float)
* **Weight** - **f**loat (`'f'`: 32-bit float)

``` python
dtype = ['U10', 'f', 'f']
my_table2 = np.genfromtxt('data/height-weight.csv', delimiter=",", skip_header=True, dtype=dtype)
```

---

## Reading

When you read in a table with multiple data types, NumPy does not make a 2D array. Instead, it makes a 1D *structured array*, where each element is a record (one row of the table) holding fields of different types.
``` python
my_table2
```

```
## array([('Male', 182.87, 76.57), ('Male', 179.12, 80.43),
## ('Male', 169.15, 75.48), ('Male', 175.66, 94.54),
## ('Female', 164.47, 71.78), ('Female', 158.27, 69.9 ),
## ('Female', 161.69, 68.85), ('Female', 165.84, 70.44),
## ('Male', 181.32, 76.9 ), ('Male', 167.37, 79.06),
## ('Female', 160.06, 72.37), ('Female', 166.48, 67.34),
## ('Male', 175.39, 92.22), ('Female', 164.7 , 75.69),
## ('Female', 163.79, 65.76), ('Male', 181.13, 72.33),
## ('Male', 169.24, 73.3 ), ('Male', 176.22, 97.67),
## ('Male', 174.09, 72.2 ), ('Male', 180.11, 75.72),
## ('Male', 179.24, 75.54), ('Female', 161.92, 69.92),
## ('Male', 169.85, 90.63), ('Female', 160.57, 63.54),
## ('Female', 168.24, 69.57), ('Male', 177.75, 74.84),
## ('Male', 183.21, 83.36), ('Male', 167.75, 82.06),
## ('Male', 181.15, 83.93), ('Male', 181.56, 79.54),
## ('Female', 160.03, 64.3 ), ('Male', 165.62, 76.72),
## ('Male', 181.64, 96.91), ('Female', 159.67, 71.88),
## ('Male', 177.03, 74.04), ('Female', 163.35, 70.46),
## ('Male', 175.21, 83.65), ('Female', 160.8 , 64.77),
## ('Male', 166.46, 76.83), ('Female', 157.95, 67.41),
## ('Male', 180.61, 83.59), ('Female', 159.52, 67.99),
## ('Female', 163.01, 65.19), ('Female', 165.8 , 71.77),
## ('Female', 170.03, 66.68), ('Female', 157.16, 69.64),
## ('Female', 164.58, 72.99), ('Female', 163.47, 72.89),
## ('Male', 185.43, 87.23), ('Female', 165.34, 70.84),
## ('Female', 163.45, 67.67), ('Female', 163.97, 66.71),
## ('Female', 161.38, 73.55), ('Female', 160.09, 65.93),
## ('Male', 178.64, 97.05), ('Female', 159.78, 68.31),
## ('Female', 161.57, 67.92), ('Female', 161.83, 66.03),
## ('Male', 169.66, 77.3 ), ('Male', 166.84, 88.25),
## ('Female', 159.32, 64.92), ('Male', 170.51, 84.35),
## ('Female', 161.84, 69.97), ('Male', 171.41, 81.7 ),
## ('Male', 166.75, 79.06), ('Female', 166.19, 67.46),
## ('Male', 169.16, 90.08), ('Female', 157.01, 66.56),
## ('Male', 167.51, 84.15), ('Female', 160.47, 68.2 ),
## ('Female', 162.33, 66.47), ('Male', 175.67, 88.82),
## ('Male', 174.25, 80.93), ('Female', 158.94, 65.14),
## ('Male', 172.72, 67.62), ('Female', 159.23, 69.96),
## ('Male', 176.54, 90.76), ('Male', 184.34, 90.41),
## ('Female', 163.94, 71.47), ('Female', 160.09, 68.94),
## ('Female', 162.32, 72.72), ('Female', 162.59, 69.76),
## ('Male', 171.94, 82.11), ('Female', 158.07, 69.8 ),
## ('Female', 158.35, 69.72), ('Female', 162.18, 67.81),
## ('Female', 159.38, 70.37), ('Male', 171.45, 84.29),
## ('Female', 163.17, 64.47), ('Male', 183.1 , 82.47),
## ('Male', 177.14, 88.7 ), ('Male', 171.08, 72.51),
## ('Female', 159.33, 70.68), ('Male', 185.43, 73.63),
## ('Female', 162.65, 73.99), ('Female', 159.44, 66.21),
## ('Female', 164.11, 70.66), ('Female', 159.13, 66.96),
## ('Female', 160.58, 71.49), ('Female', 164.88, 68.07)],
## dtype=[('f0', '<U10'), ('f1', '<f4'), ('f2', '<f4')])
```

---

## Reading

While reading, we can also assign our data to multiple objects using the `unpack` argument. Here we assign each column to its own separate array.
``` python
sex, height, weight = np.genfromtxt('data/height-weight.csv', unpack=True, delimiter=",", skip_header=True, dtype=dtype)
sex
```

```
## array(['Male', 'Male', 'Male', 'Male', 'Female', 'Female', 'Female',
## 'Female', 'Male', 'Male', 'Female', 'Female', 'Male', 'Female',
## 'Female', 'Male', 'Male', 'Male', 'Male', 'Male', 'Male', 'Female',
## 'Male', 'Female', 'Female', 'Male', 'Male', 'Male', 'Male', 'Male',
## 'Female', 'Male', 'Male', 'Female', 'Male', 'Female', 'Male',
## 'Female', 'Male', 'Female', 'Male', 'Female', 'Female', 'Female',
## 'Female', 'Female', 'Female', 'Female', 'Male', 'Female', 'Female',
## 'Female', 'Female', 'Female', 'Male', 'Female', 'Female', 'Female',
## 'Male', 'Male', 'Female', 'Male', 'Female', 'Male', 'Male',
## 'Female', 'Male', 'Female', 'Male', 'Female', 'Female', 'Male',
## 'Male', 'Female', 'Male', 'Female', 'Male', 'Male', 'Female',
## 'Female', 'Female', 'Female', 'Male', 'Female', 'Female', 'Female',
## 'Female', 'Male', 'Female', 'Male', 'Male', 'Male', 'Female',
## 'Male', 'Female', 'Female', 'Female', 'Female', 'Female', 'Female'],
## dtype='<U10')
```

---

## Reading

As an alternative to setting the data type explicitly, you can let `genfromtxt()` determine it automatically by setting both *dtype* and *encoding* to `None`. This works well most of the time.
``` python
sex, height, weight = np.genfromtxt('data/height-weight.csv', delimiter=",", skip_header=True, unpack=True, dtype=None, encoding=None)
sex
```

```
## array(['Male', 'Male', 'Male', 'Male', 'Female', 'Female', 'Female',
## 'Female', 'Male', 'Male', 'Female', 'Female', 'Male', 'Female',
## 'Female', 'Male', 'Male', 'Male', 'Male', 'Male', 'Male', 'Female',
## 'Male', 'Female', 'Female', 'Male', 'Male', 'Male', 'Male', 'Male',
## 'Female', 'Male', 'Male', 'Female', 'Male', 'Female', 'Male',
## 'Female', 'Male', 'Female', 'Male', 'Female', 'Female', 'Female',
## 'Female', 'Female', 'Female', 'Female', 'Male', 'Female', 'Female',
## 'Female', 'Female', 'Female', 'Male', 'Female', 'Female', 'Female',
## 'Male', 'Male', 'Female', 'Male', 'Female', 'Male', 'Male',
## 'Female', 'Male', 'Female', 'Male', 'Female', 'Female', 'Male',
## 'Male', 'Female', 'Male', 'Female', 'Male', 'Male', 'Female',
## 'Female', 'Female', 'Female', 'Male', 'Female', 'Female', 'Female',
## 'Female', 'Male', 'Female', 'Male', 'Male', 'Male', 'Female',
## 'Male', 'Female', 'Female', 'Female', 'Female', 'Female', 'Female'],
## dtype='<U6')
```

---

## Quick Recap - Array Filtering

Once you have performed some analysis, you will want to export the results to a new file. Let's do some quick filtering of the data based on what we learned yesterday, and export the results afterwards.

First, we make a 2D array.

``` python
new_array = np.array([sex, height, weight])
```

Because a NumPy array holds a single data type, the heights and weights are converted to strings to match `sex`.

Next, we do a logical test to see which entries in our first row are Female.
``` python
to_subset = new_array[0,:]=="Female"
to_subset
```

```
## array([False, False, False, False, True, True, True, True, False,
## False, True, True, False, True, True, False, False, False,
## False, False, False, True, False, True, True, False, False,
## False, False, False, True, False, False, True, False, True,
## False, True, False, True, False, True, True, True, True,
## True, True, True, False, True, True, True, True, True,
## False, True, True, True, False, False, True, False, True,
## False, False, True, False, True, False, True, True, False,
## False, True, False, True, False, False, True, True, True,
## True, False, True, True, True, True, False, True, False,
## False, False, True, False, True, True, True, True, True,
## True])
```

---

## Quick Recap

We can use the boolean array we created to subset the columns of our array, keeping only the entries that are `Female`.

``` python
subset_array = new_array[:,to_subset]
subset_array
```

```
## array([['Female', 'Female', 'Female', 'Female', 'Female', 'Female',
## 'Female', 'Female', 'Female', 'Female', 'Female', 'Female',
## 'Female', 'Female', 'Female', 'Female', 'Female', 'Female',
## 'Female', 'Female', 'Female', 'Female', 'Female', 'Female',
## 'Female', 'Female', 'Female', 'Female', 'Female', 'Female',
## 'Female', 'Female', 'Female', 'Female', 'Female', 'Female',
## 'Female', 'Female', 'Female', 'Female', 'Female', 'Female',
## 'Female', 'Female', 'Female', 'Female', 'Female', 'Female',
## 'Female', 'Female', 'Female', 'Female', 'Female', 'Female',
## 'Female'],
## ['164.47', '158.27', '161.69', '165.84', '160.06', '166.48',
## '164.7', '163.79', '161.92', '160.57', '168.24', '160.03',
## '159.67', '163.35', '160.8', '157.95', '159.52', '163.01',
## '165.8', '170.03', '157.16', '164.58', '163.47', '165.34',
## '163.45', '163.97', '161.38', '160.09', '159.78', '161.57',
## '161.83', '159.32', '161.84', '166.19', '157.01', '160.47',
## '162.33', '158.94', '159.23', '163.94', '160.09', '162.32',
## '162.59', '158.07', '158.35', '162.18', '159.38', '163.17',
## '159.33', '162.65', '159.44', '164.11', '159.13', '160.58',
## '164.88'],
## ['71.78', '69.9', '68.85', '70.44', '72.37', '67.34', '75.69',
## '65.76', '69.92', '63.54', '69.57', '64.3', '71.88', '70.46',
## '64.77', '67.41', '67.99', '65.19', '71.77', '66.68', '69.64',
## '72.99', '72.89', '70.84', '67.67', '66.71', '73.55', '65.93',
## '68.31', '67.92', '66.03', '64.92', '69.97', '67.46', '66.56',
## '68.2', '66.47', '65.14', '69.96', '71.47', '68.94', '72.72',
## '69.76', '69.8', '69.72', '67.81', '70.37', '64.47', '70.68',
## '73.99', '66.21', '70.66', '66.96', '71.49', '68.07']],
## dtype='<U32')
```

---

## Writing files

To export the subset array, we use the `savetxt()` function. It has similar arguments to `genfromtxt()`. A key addition is `fmt`, the format in which the data is saved. You can specify scientific notation or significant digits, or, as in our case, simply that each value is written as a string (`'%s'`).

``` python
np.savetxt("height-weight_female.csv", subset_array, delimiter=',', fmt='%s')
```

Note that `savetxt()` writes each row of the array as one line, so this file has three lines (sex, heights and weights) rather than one line per person. Saving `subset_array.T` instead would give one person per line.

---

## Complex reading/writing

Although we will often use these NumPy approaches, there are also libraries dedicated to specific kinds of data. The most widely used is [pandas](https://pandas.pydata.org/docs/getting_started/index.html#getting-started), a specialized library for managing data frames. Data frames are similar to arrays but more flexible when a table contains multiple data types. pandas can also read from and write to Excel spreadsheets.

[BioPython](https://biopython.org/) and [BioNumPy](https://bionumpy.github.io/bionumpy/menu.html) have a range of utilities for managing biological data formats, e.g. FASTA and FASTQ.

---
class: inverse, center, middle

# Running Scripts

<html><div style='float:left'></div><hr color='#EB811B' size=1px width=720px></html>

---

## Scripts

So far we have predominantly been working interactively with the console: asking it questions and getting answers back immediately.
When you want to run all your code at once, or start automating your work, you can run a whole script instead. In this case, all of our code is written out in a `.py` file. This is good practice for mature analyses that you have finalized, as it ensures that everything is properly documented.

Let's have a look at an example Python script.

```
data/subset_dataset.py
```

---

## Example Script

This is our script:

``` python
import numpy as np

# Read in dataset
sex, height, weight = np.genfromtxt('data/height-weight.csv', delimiter = ",", skip_header=True, unpack = True, encoding=None, dtype=None)
new_array = np.array([sex, height, weight])

# Subset array
to_subset = new_array[0,:]=="Female"
subset_array = new_array[:,to_subset]

# Print out number of Females in data set and export subset
print(str(sum(to_subset)) + " are female")
np.savetxt("data/height-weight_female.csv", subset_array, delimiter=',', fmt='%s')
```

---

## Comments

We use the hash sign (`#`, also called the number or pound sign) to mark everything after it on that line as "commented out". This means that Python will not evaluate it, which gives us room to annotate our code.

Comments are a core part of good coding etiquette. If you are sharing scripts, or need to figure out what you did at a later date, it helps to have a short statement explaining each step. The longer and more complex your code gets, the more detailed your comments should be.

---

## Running scripts

There are a couple of options for running scripts. In VS Code we can simply press the *Run* button, which looks like a Play icon.

---

## Running scripts

VS Code is helping us out here by running the script without us having to start Python from the terminal ourselves. You can still see what it is doing in the terminal when it runs.

More traditionally, we would invoke Python directly and give it the script. You will have to do this yourself if you want to run a more complex script, e.g. one that takes arguments.
```
/Users/mattpaul/Desktop/miniconda3/envs/intro_to_python/bin/python /Users/mattpaul/Documents/RU/Training/Intro_To_Python/r_course/data/subset_dataset.py
```

---

## Passing Arguments

To use arguments, we first have to modify our script. Arguments are made available through the *sys* module, which stores them as a list of strings in *sys.argv*. The first entry is the script's name; subsequent entries are the arguments.

``` python
import sys
import numpy as np

print("My Script Name:", sys.argv[0])
print("My Argument:", sys.argv[1])
arg1 = sys.argv[1]

# Read in dataset
sex, height, weight = np.genfromtxt('data/height-weight.csv', delimiter = ",", skip_header=True, unpack = True, encoding=None, dtype=None)
new_array = np.array([sex, height, weight])

# Subset array
to_subset = new_array[0,:]==arg1
subset_array = new_array[:,to_subset]

# Print out number of entries in the chosen group and export subset
print(str(sum(to_subset)) + " are " + arg1)
np.savetxt("data/height-weight_"+arg1+".csv", subset_array, delimiter=',', fmt='%s')
```

---

## Passing Arguments

To pass the argument to our script when we run it, we simply add it after the path to our Python script.

```
/Users/mattpaul/Desktop/miniconda3/envs/intro_to_python/bin/python /Users/mattpaul/Documents/RU/Training/Intro_To_Python/r_course/data/subset_dataset_args.py Male
```

---

## Script Messages

You should see that when this runs, a new file is created. Also, even though we do not have an interactive Python session open, the output of the `print` statements appears in the terminal. In long and complex scripts these messages become even more important as checkpoints to make sure your code is working as expected. Any important result should still be saved to a file, though, as these screen messages do not persist.

---

## An extra note

Keeping your code tidy can be a chore, and there are many ways to store it. Most of the time we are not writing production-level scripts.
Instead, you will be analyzing a data set and making decisions interactively. Notebooks let you tie the code, the analysis decisions and the results of the code together in a single file. There are several options for Python, but Jupyter Notebook is the best known. Quarto is growing in popularity as it is language agnostic.

---

## Time for an exercise!

Exercises on the topics we have covered so far can be found [here](../../exercises/exercises/Exercise5_exercise.html)

---

## Answers to the exercise

Answers can be found [here](../../exercises/answers/Exercise5_answers.html)

---

## Further Support

When you hit bugs:

* Google/ChatGPT/Claude, etc.
* Stack Overflow
* Biostars
* Reach out on [GitHub](https://github.com/RockefellerUniversity/Intro_To_Python/issues)

Other Reference Material:

* [Harvard's Python Course](https://cs50.harvard.edu/python/2022/)
* [Geeks For Geeks](https://www.geeksforgeeks.org/getting-started-with-python-programming/)
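---

## Extra: a more defensive script

The argument script from *Passing Arguments* reads `sys.argv[1]` straight away, so running it without an argument stops with an `IndexError`. Below is a minimal sketch of a more defensive version. It is our own illustration, not one of the course files: the names `subset_by_group()` and `main()` and the usage message are hypothetical, and it assumes *data/height-weight.csv* has a single header row followed by sex, height and weight columns. Reading with `names=True` keeps each column's own type (text for sex, numbers for height and weight) instead of converting everything to strings, and the subset is written one person per line under the original column names.

``` python
import sys

import numpy as np


def subset_by_group(in_file, out_file, group):
    """Write the rows whose first column equals `group` to `out_file`.

    Returns the number of rows written. Hypothetical helper for illustration;
    assumes a comma-separated file with a single header row.
    """
    # names=True takes the field names from the header row, and dtype=None
    # lets NumPy choose a type per column, so heights and weights stay numeric
    table = np.genfromtxt(in_file, delimiter=",", names=True,
                          dtype=None, encoding=None)
    # A file with only one data row is returned as a 0-d array; make it 1-D
    table = np.atleast_1d(table)

    # Select the first column by its name and keep the matching records
    first_column = table[table.dtype.names[0]]
    subset = table[first_column == group]

    # Write one record per line under the original column names;
    # comments="" stops savetxt from putting "# " in front of the header
    np.savetxt(out_file, subset, delimiter=",", fmt="%s",
               header=",".join(table.dtype.names), comments="")
    return len(subset)


def main(argv):
    # argv[0] is the script name, so we expect exactly one extra argument
    if len(argv) != 2:
        print("Usage: python " + argv[0] + " <group, e.g. Male or Female>")
        return 1

    group = argv[1]
    count = subset_by_group("data/height-weight.csv",
                            "data/height-weight_" + group + ".csv", group)
    print(str(count) + " are " + group)
    return 0


# Run main() only when this file is executed as a script, not when imported
if __name__ == "__main__":
    main(sys.argv)
```

Saved under a name of your choice (e.g. a hypothetical *data/subset_defensive.py*), it is run the same way as before, with the group name added after the script path. Without an argument it prints the usage message instead of crashing.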